Drop-in SwiftUI chat view, headless ChatEngine, LLM-agnostic via AnyLanguageModel. Read-only by default with configurable allowlists. Robust SQL parser with 63 tests. Includes demo app with GitHub stars dataset.

SwiftDBAI

A Swift package that adds a natural language query interface to any SQLite database in your iOS, macOS, or visionOS app. Drop in one SwiftUI view and your users can ask questions about their data in plain English.

Demo

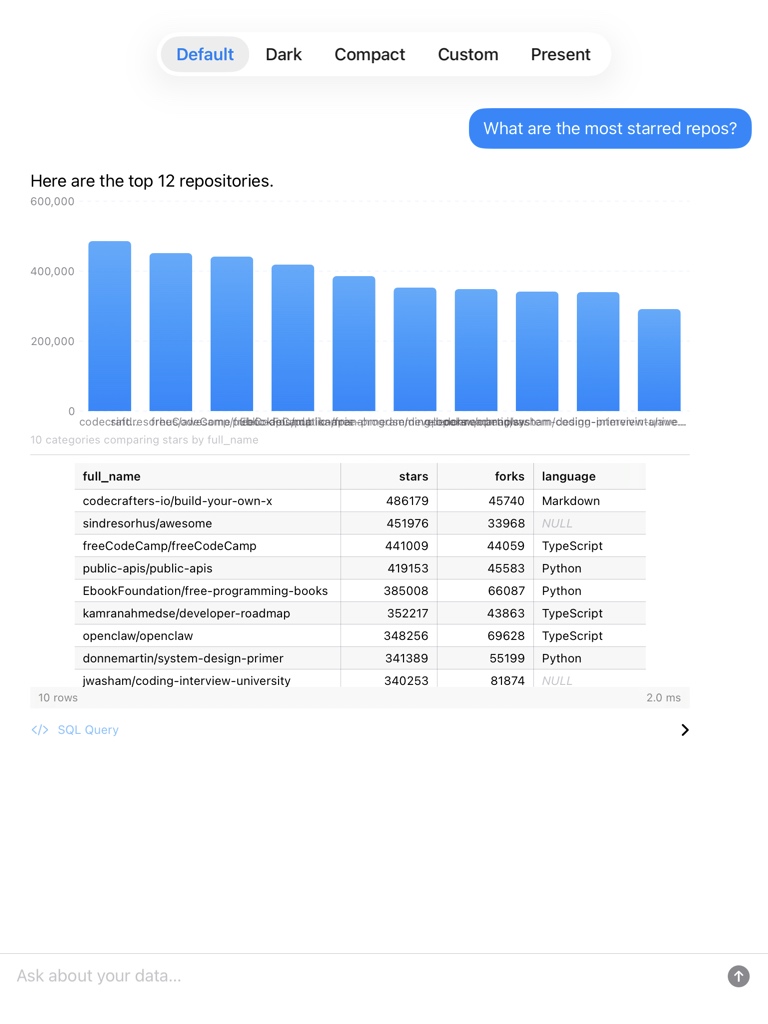

Screenshots: iPhone, iPad.

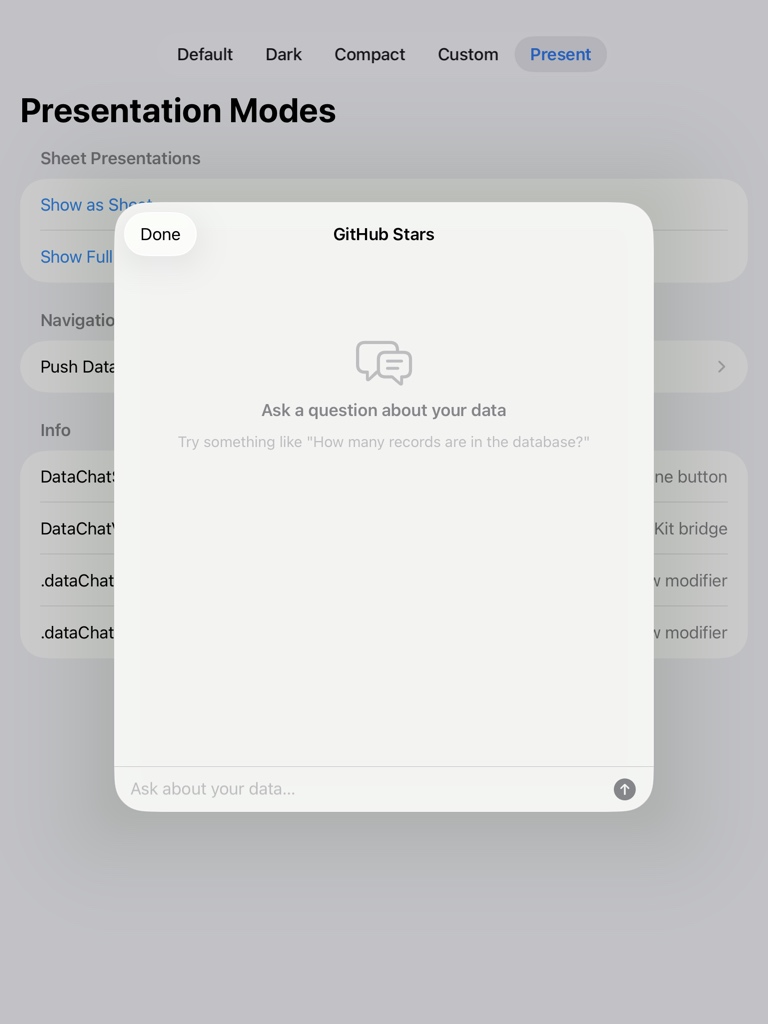

Screenshots: custom theme, sheet presentation.

The demo app is at Example/SwiftDBAIDemo/. It points SwiftDBAI at a real database of ~2,000 top GitHub repos with live star counts. Generate the Xcode project with xcodegen:

    cd Example/SwiftDBAIDemo && xcodegen generate

For a real-world integration, see SwiftDBAI added to NetNewsWire -- natural language queries against an RSS reader's article database.

Features

- Drop-in SwiftUI chat view (DataChatView) -- one line to add a database chat UI

- Headless ChatEngine for programmatic / non-UI use

- LLM-agnostic via AnyLanguageModel -- works with OpenAI, Anthropic, Gemini, Ollama, llama.cpp, or any OpenAI-compatible endpoint

- Automatic schema introspection -- no manual annotations required

- Safety-first: read-only by default, operation allowlists, table-level mutation policies, destructive operation confirmation delegate

- Configurable query timeouts, context windows, and custom validators

Installation

Add SwiftDBAI via Swift Package Manager:

    dependencies: [
        .package(url: "https://github.com/krishkumar/SwiftDBAI.git", from: "1.0.0"),
    ]

Then add the dependency to your target:

    .target(
        name: "MyApp",
        dependencies: ["SwiftDBAI"]
    )
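Put together, the two fragments above might sit in a manifest like the following. This is a sketch only: the package and target name `MyApp` is a placeholder, and the tools version and platform list are assumptions taken from the Requirements section rather than from the library's own manifest.

```swift
// swift-tools-version: 6.1
import PackageDescription

// Hypothetical host package; "MyApp" is a placeholder name.
let package = Package(
    name: "MyApp",
    // Assumed from the Requirements section: iOS 17, macOS 14, visionOS 1.
    platforms: [.iOS(.v17), .macOS(.v14), .visionOS(.v1)],
    dependencies: [
        .package(url: "https://github.com/krishkumar/SwiftDBAI.git", from: "1.0.0"),
    ],
    targets: [
        .target(
            name: "MyApp",
            dependencies: ["SwiftDBAI"]
        ),
    ]
)
```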

Quick Start

Drop a full chat UI into any SwiftUI view with DataChatView:

    import SwiftUI
    import SwiftDBAI
    import AnyLanguageModel

    struct ContentView: View {
        var body: some View {
            DataChatView(
                databasePath: "/path/to/mydata.sqlite",
                model: OllamaLanguageModel(model: "llama3")
            )
        }
    }

That's it. DataChatView opens the database, introspects the schema, and renders a chat interface. The default mode is read-only (SELECT only).

To pass an existing GRDB connection and customize behavior:

    DataChatView(
        database: myDatabasePool,
        model: OpenAILanguageModel(apiKey: "sk-...", model: "gpt-4o"),
        allowlist: .standard,
        additionalContext: "This database stores a recipe app's data.",
        maxSummaryRows: 100
    )

Presentation

DataChatSheet wraps DataChatView in a NavigationStack with a title and Done button, ready for any presentation context.

SwiftUI sheet:

    .sheet(isPresented: $showChat) {
        DataChatSheet(
            databasePath: "/path/to/mydata.sqlite",
            model: OllamaLanguageModel(model: "llama3")
        )
    }

    // Or use the convenience modifier:
    .dataChatSheet(isPresented: $showChat, databasePath: path, model: myLLM)

SwiftUI full-screen cover:

    .fullScreenCover(isPresented: $showChat) {
        DataChatSheet(databasePath: path, model: myLLM)
    }

    // Or use the convenience modifier:
    .dataChatFullScreen(isPresented: $showChat, databasePath: path, model: myLLM)

UIKit modal:

    let vc = DataChatViewController(databasePath: path, model: myLLM)
    present(vc, animated: true)

UIKit navigation push:

    let vc = DataChatViewController(databasePath: path, model: myLLM)
    navigationController?.pushViewController(vc, animated: true)

All presentation wrappers accept the same parameters as DataChatView (allowlist, additionalContext, etc.) plus a title for the navigation bar.

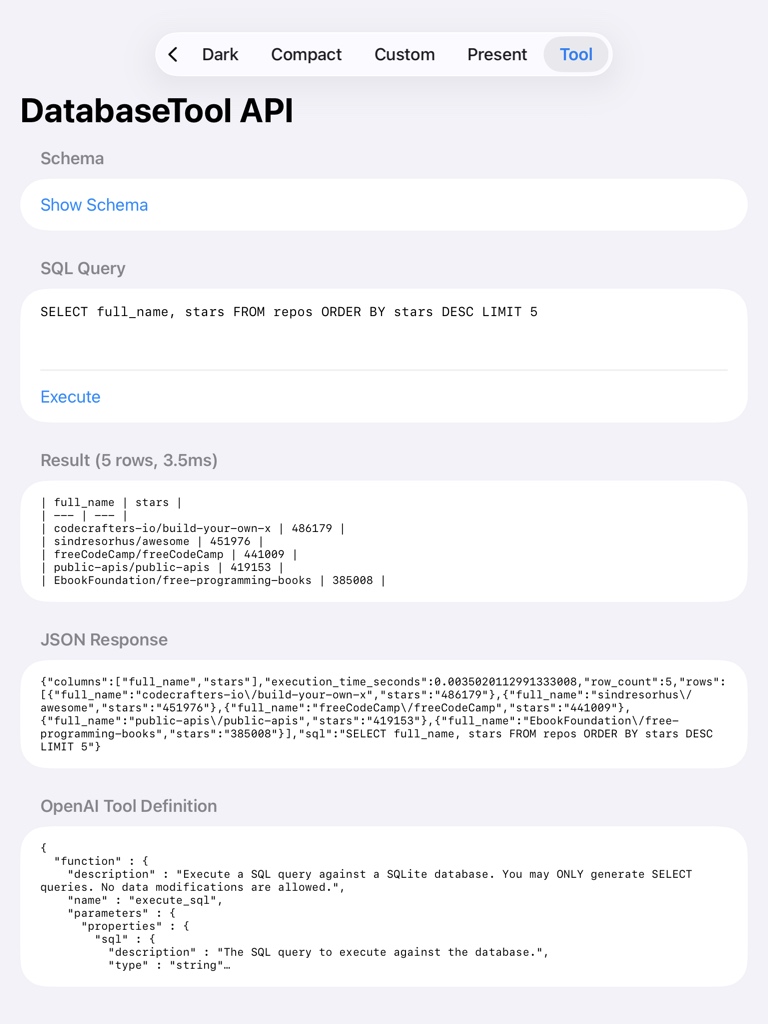

Tool Calling

If your app already has an LLM integration, use DatabaseTool to register SwiftDBAI as a tool the LLM can call. No extra LLM needed -- your existing one generates SQL, SwiftDBAI validates and executes it.

    import SwiftDBAI

    // 1. Create the tool
    let tool = try await DatabaseTool(databasePath: "/path/to/mydata.sqlite")

    // 2. Add schema context to your LLM's system prompt
    let systemPrompt = "You are a helpful assistant.\n\n" + tool.systemPromptSnippet

    // 3. Register with your LLM (OpenAI function calling example)
    let functionDef = tool.openAIFunctionDefinition
    // Pass to OpenAI's tools parameter...

    // 4. When the LLM calls the tool
    let result = try tool.execute(sql: "SELECT * FROM users WHERE active = 1")
    result.jsonString      // return to LLM as tool response
    result.markdownTable   // display to user
    result.rowCount        // 42
    result.executionTime   // 0.003

SQL is validated against a read-only allowlist before execution. INSERT, UPDATE, DELETE, and DROP are rejected.
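To make the read-only rule concrete, here is a deliberately conservative sketch of that kind of check. It is not SwiftDBAI's parser: `leadingKeyword(of:)` and `isReadOnly(_:)` are hypothetical helpers that only inspect the first keyword and reject anything that looks like stacked statements.

```swift
import Foundation

/// Hypothetical helper (not part of SwiftDBAI): returns the first keyword of a
/// SQL string, uppercased, or nil when the string does not start with a letter.
func leadingKeyword(of sql: String) -> String? {
    let trimmed = sql.trimmingCharacters(in: .whitespacesAndNewlines)
    let word = trimmed.prefix(while: { $0.isLetter })
    return word.isEmpty ? nil : word.uppercased()
}

/// Accepts a statement only when it is a single statement starting with SELECT.
/// Assumption: any ';' other than one trailing semicolon means stacked
/// statements, so the input is rejected.
func isReadOnly(_ sql: String) -> Bool {
    var body = sql.trimmingCharacters(in: .whitespacesAndNewlines)
    if body.hasSuffix(";") { body.removeLast() }      // allow one trailing semicolon
    guard !body.contains(";") else { return false }   // reject stacked statements
    return leadingKeyword(of: body) == "SELECT"
}
```

The sketch errs on the side of rejection: a `;` inside a string literal, a leading comment, or a `WITH ... SELECT` query would all be refused. A real parser has to handle those cases.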

Headless / Programmatic Use

Use ChatEngine directly when you don't need a UI:

    import SwiftDBAI
    import AnyLanguageModel
    import GRDB

    let pool = try DatabasePool(path: "/path/to/mydata.sqlite")

    let engine = ChatEngine(
        database: pool,
        model: OpenAILanguageModel(apiKey: "sk-...", model: "gpt-4o")
    )

    let response = try await engine.send("How many users signed up this week?")
    print(response.summary)  // "There were 42 new signups this week."
    print(response.sql)      // Optional("SELECT COUNT(*) FROM users WHERE ...")

ChatEngine also accepts a ProviderConfiguration for convenience:

    let engine = ChatEngine(
        database: pool,
        provider: .anthropic(apiKey: "sk-ant-...", model: "claude-sonnet-4-20250514")
    )

For fine-grained control, pass a ChatEngineConfiguration:

    var config = ChatEngineConfiguration(
        queryTimeout: 10,
        contextWindowSize: 20,
        maxSummaryRows: 100,
        additionalContext: "The 'status' column uses: 'active', 'inactive', 'suspended'."
    )

    let engine = ChatEngine(
        database: pool,
        model: model,
        allowlist: .standard,
        configuration: config
    )

Choosing a Provider

SwiftDBAI works with any provider supported by AnyLanguageModel. Use ProviderConfiguration factory methods or construct model instances directly.

    // OpenAI
    let config = ProviderConfiguration.openAI(apiKey: "sk-...", model: "gpt-4o")

    // Anthropic
    let config = ProviderConfiguration.anthropic(apiKey: "sk-ant-...", model: "claude-sonnet-4-20250514")

    // Gemini
    let config = ProviderConfiguration.gemini(apiKey: "AIza...", model: "gemini-2.0-flash")

    // Ollama (local, no API key needed)
    let config = ProviderConfiguration.ollama(model: "llama3.2")

    // llama.cpp (local)
    let config = ProviderConfiguration.llamaCpp(model: "default")

    // Any OpenAI-compatible endpoint
    let config = ProviderConfiguration.openAICompatible(
        apiKey: "your-key",
        model: "llama-3.1-70b",
        baseURL: URL(string: "https://api.together.xyz/v1/")!
    )

Use with ChatEngine:

    let engine = ChatEngine(database: pool, provider: config)
    // or
    let engine = ChatEngine(database: pool, model: config.makeModel())

API keys can also come from environment variables:

    let config = ProviderConfiguration.fromEnvironment(
        provider: .openAI,
        environmentVariable: "OPENAI_API_KEY",
        model: "gpt-4o"
    )
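The text does not say what happens when the variable is unset, so an app may want to check for the key itself before building a configuration. The following is a minimal sketch using only Foundation. `apiKey(named:in:)` and `MissingAPIKey` are hypothetical names, and the environment is passed in as a dictionary so the lookup can be tested.

```swift
import Foundation

/// Hypothetical error for this sketch; not a SwiftDBAI type.
struct MissingAPIKey: Error, Equatable {
    let variable: String
}

/// Looks up an API key by environment variable name.
/// Throws when the variable is absent or contains only whitespace.
func apiKey(
    named variable: String,
    in environment: [String: String] = ProcessInfo.processInfo.environment
) throws -> String {
    guard let value = environment[variable]?
            .trimmingCharacters(in: .whitespacesAndNewlines),
          !value.isEmpty else {
        throw MissingAPIKey(variable: variable)
    }
    return value
}
```

The returned string can then be passed to one of the factory methods shown earlier, for example `ProviderConfiguration.openAI(apiKey:model:)`.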

Safety and Mutation Control

Operation Allowlist

By default, only SELECT queries are allowed. Opt in to writes explicitly:

| Preset | Allowed Operations |
|---|---|
| .readOnly (default) | SELECT |
| .standard | SELECT, INSERT, UPDATE |
| .unrestricted | SELECT, INSERT, UPDATE, DELETE |

    // Custom allowlist
    let allowlist = OperationAllowlist([.select, .insert])

Mutation Policy

For table-level control, use MutationPolicy:

    // Allow INSERT and UPDATE only on specific tables
    let policy = MutationPolicy(
        allowedOperations: [.insert, .update],
        allowedTables: ["orders", "order_items"]
    )

    let engine = ChatEngine(
        database: pool,
        model: model,
        mutationPolicy: policy
    )

Presets: .readOnly, .readWrite, .unrestricted.
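As an illustration of what a table-level rule decides, the sketch below models the policy from the example with stand-in types. `SQLOperation` and `TablePolicySketch` are hypothetical and are not SwiftDBAI's `MutationPolicy`. The sketch also assumes that reads are always allowed and that table names compare case-insensitively, neither of which the text confirms.

```swift
/// Hypothetical stand-in for the library's operation type.
enum SQLOperation: Hashable {
    case select, insert, update, delete
}

/// Hypothetical stand-in for a table-level mutation policy.
struct TablePolicySketch {
    let allowedOperations: Set<SQLOperation>
    let allowedTables: Set<String>

    init(allowedOperations: Set<SQLOperation>, allowedTables: Set<String>) {
        self.allowedOperations = allowedOperations
        // Assumption: table names are normalized to lowercase for comparison.
        self.allowedTables = Set(allowedTables.map { $0.lowercased() })
    }

    /// Assumption: SELECT is always permitted; a mutation needs both its
    /// operation and its target table to be on the allowed lists.
    func permits(_ operation: SQLOperation, on table: String) -> Bool {
        if operation == .select { return true }
        return allowedOperations.contains(operation)
            && allowedTables.contains(table.lowercased())
    }
}

let policy = TablePolicySketch(
    allowedOperations: [.insert, .update],
    allowedTables: ["orders", "order_items"]
)
```

With this policy, an INSERT into `orders` passes, while an INSERT into `users` or a DELETE from `orders` is refused.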

Confirmation Delegate

Destructive operations (DELETE, DROP, ALTER, TRUNCATE) require confirmation through a ToolExecutionDelegate:

    struct MyDelegate: ToolExecutionDelegate {
        func confirmDestructiveOperation(
            _ context: DestructiveOperationContext
        ) async -> Bool {
            // Present confirmation UI, return true to proceed
            return await showConfirmationDialog(context.description)
        }
    }

    let engine = ChatEngine(
        database: pool,
        model: model,
        allowlist: .unrestricted,
        delegate: MyDelegate()
    )

Without a delegate, destructive operations throw SwiftDBAIError.confirmationRequired so you can handle confirmation in your own flow.

Built-in delegates: AutoApproveDelegate (testing only), RejectAllDelegate (safest).
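The confirmation flow described above can be pictured as a small gate in front of execution. The sketch below is an assumption-laden illustration, not the library's code. `GateError`, `isDestructive(_:)`, and `gate(_:confirm:)` are hypothetical, the synchronous closure stands in for the async delegate, and the `rejected` case is a guess, since the text does not say what happens when a delegate declines.

```swift
import Foundation

/// Hypothetical errors for this sketch; not SwiftDBAIError.
enum GateError: Error, Equatable {
    case confirmationRequired   // no confirmer was supplied
    case rejected               // assumption: the confirmer said no
}

/// The destructive operations named in the text.
let destructiveKeywords: Set<String> = ["DELETE", "DROP", "ALTER", "TRUNCATE"]

/// Classifies a statement by its first keyword only (a simplification).
func isDestructive(_ sql: String) -> Bool {
    let first = sql.trimmingCharacters(in: .whitespacesAndNewlines)
        .prefix(while: { $0.isLetter })
        .uppercased()
    return destructiveKeywords.contains(first)
}

/// Lets non-destructive SQL through; destructive SQL needs a confirmer
/// that returns true. The closure is a synchronous stand-in for a delegate.
func gate(_ sql: String, confirm: ((String) -> Bool)?) throws {
    guard isDestructive(sql) else { return }
    guard let confirm else { throw GateError.confirmationRequired }
    guard confirm(sql) else { throw GateError.rejected }
}
```

Passing `nil` for `confirm` mirrors the no-delegate case, a closure that always returns `true` behaves like AutoApproveDelegate, and one that always returns `false` behaves like RejectAllDelegate.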

Architecture

    User Question
         |
         v
    ChatEngine
         |-- SchemaIntrospector   (auto-discovers tables, columns, keys, indexes)
         |-- PromptBuilder        (builds LLM system prompt with schema context)
         |-- LanguageModel        (generates SQL via AnyLanguageModel)
         |-- SQLQueryParser       (parses and validates against allowlist/policy)
         |-- QueryValidator       (optional custom validators)
         |-- GRDB                 (executes SQL against SQLite)
         |-- TextSummaryRenderer  (summarizes results via LLM)
         v
    ChatResponse { summary, sql, queryResult }

DataChatView wraps this pipeline in a SwiftUI view with ChatViewModel managing state.

Requirements

- iOS 17.0+ / macOS 14.0+ / visionOS 1.0+

- Swift 6.1+

- Xcode 16+

License

MIT. See LICENSE for details.